Earlier this month, The Washington Post looked under the hood of some of the artificial intelligence systems that power increasingly popular chatbots. These bots answer questions about a vast array of topics, engage in a conversation and even generate a complex — though not necessarily accurate — academic paper.

To “instruct” English-language AIs, called large language models (LLMs), companies feed them enormous amounts of data collected from across the web, ranging from Wikipedia to news sites to video game forums. The Post’s investigation found that the news sites in Google’s C4 data set, which has been used to instruct high-profile LLMs like Facebook’s LLaMA and Google’s own T5, include content not only from major newspapers but also from far-right sites like Breitbart and VDare.

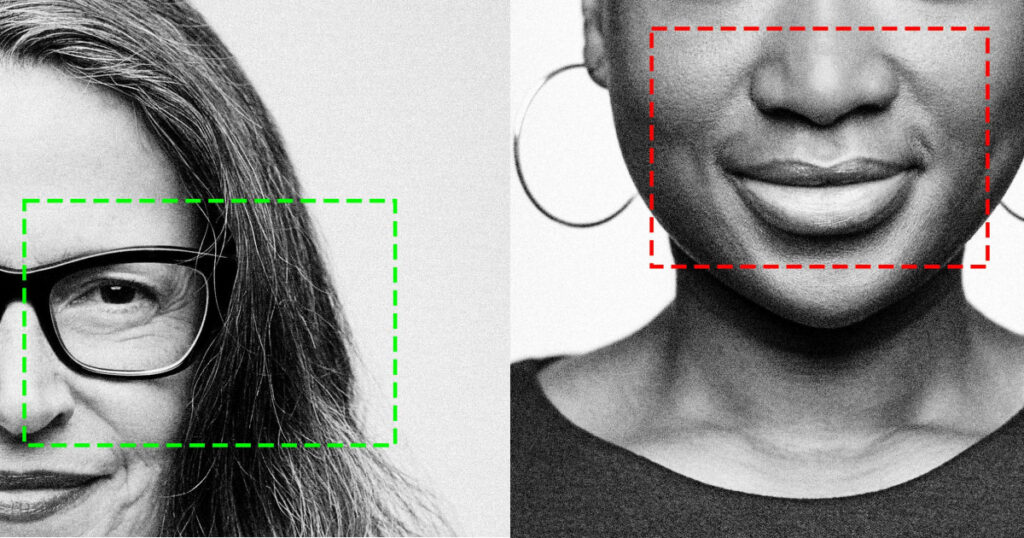

For computer scientist and journalist Meredith Broussard, author of the new book “More Than A Glitch: Confronting Race, Gender, and Ability Bias in Tech,” the Post’s findings are both deeply troubling and business as usual. “All of the preexisting social problems are reflected in the training data used to train AI systems,” she said. “The real error is assuming the AI is doing something better than humans. It’s simply not true.”

There has been an explosion of interest in chatbots since the release of OpenAI’s ChatGPT last year. People have reported using ChatGPT to help with a growing list of tasks and activities, including homework, gardening, coding, gaming, writing and editing. New York Times columnist Farhad Manjoo reported it has changed the way he and other journalists do their work, but warned they need to proceed with caution. “ChatGPT and other chatbots are known to make stuff up or otherwise spew out incorrect information,” he wrote. “They’re also black boxes. Not even ChatGPT’s creators fully know why it suggests some ideas over others, or which way its biases run, or the myriad other ways it may screw up.”

But Broussard points…
